The future of inclusive hiring: balancing AI with human judgement

At our recent Belong Amsterdam panel, we brought together HR leaders and talent specialists to explore a question many organisations are grappling with: can AI tools genuinely support inclusive hiring, or are we unintentionally hard‑coding existing biases into the system?

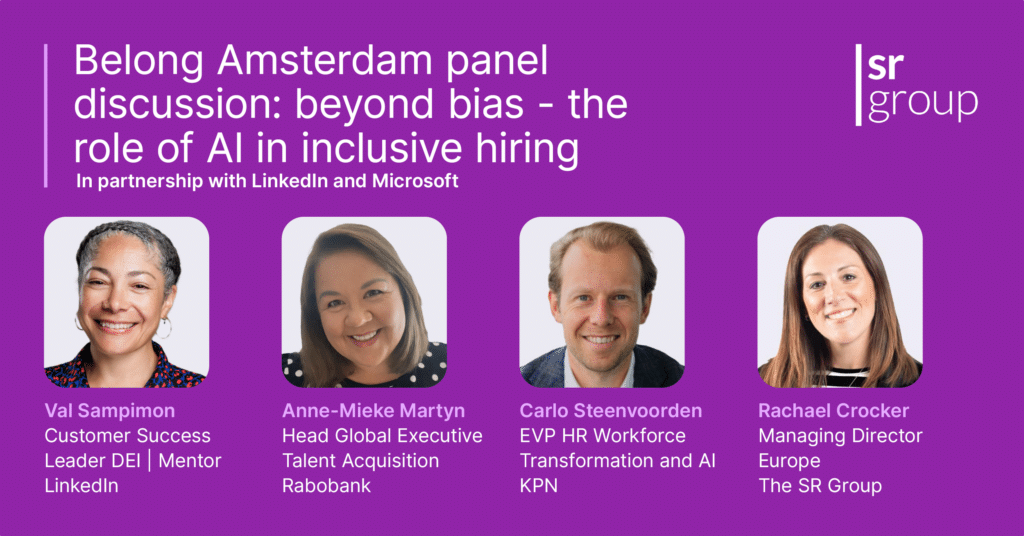

The event was moderated by Rachael Crocker, Managing Director for Europe at The SR Group, and supported by partners at LinkedIn and Microsoft. The discussion centred on practical, real‑world experience rather than the hype that often surrounds AI‑powered recruitment and the automation of the modern hiring process.

Our panellists were:

- Val Sampimon, Customer Success Leader, LinkedIn

- Anne-Mieke Martyn, Head Global Executive Talent Acquisition, Rabobank

- Carlo Steenvoorden, EVP HR Workforce Transformation, People Services, People Analytics and AI, KPN

They shared how they’re experimenting with AI systems, learning from mistakes and rethinking their hiring practices as they adopt new recruitment tools and AI models.

Seeing bias for what it is

The session opened with a simple visualisation exercise: imagining a pilot, a couple celebrating an anniversary and a doctor appearing at your bedside. Most of us unknowingly pictured stereotypical images – a clear example of unconscious bias.

It was a grounding reminder that bias influences decisions throughout hiring, from sourcing to reviewing resumes to interpreting competencies. As Val highlighted, artificial intelligence can easily “amplify bias if not checked,” particularly when it is trained on large historical datasets that reflect past hiring patterns.

This is why many HR teams are introducing audits, building clearer metrics and reviewing the algorithms behind their AI hiring tools to ensure outcomes align with organisational values.

Where AI is moving the dial

Anne‑Mieke shared how AI‑driven search is already helping Rabobank challenge long‑entrenched patterns in executive hiring. Traditional shortlists tended to surface similar profiles, but by running AI‑led “shadow searches,” her team quickly accessed broader, more diverse talent pools, including candidates from under‑represented groups.

The technology doesn’t remove bias entirely, but it does support more data‑driven and inclusive recruitment by elevating skills and competencies often overlooked in manual processes. It also helps hiring managers widen their perspective on what leadership potential looks like in a diverse workforce.

Val echoed this from the platform side. LinkedIn’s agentic tools help recruiters streamline admin, review job descriptions, question where AI belongs in each selection step and keep human oversight central to hiring decisions. Rather than replacing recruiters, this automation frees them to focus on candidate experience, cultural signals and genuine engagement.

Why literacy matters more than tools

Carlo emphasised that before adopting any AI‑driven solutions at KPN, he deliberately held off on implementation until the organisation had built AI literacy. Managers spent time understanding how machine learning works, how training data shapes outcomes and where AI algorithms might unintentionally reinforce inequalities.

This foundation now allows teams to challenge vendors, understand data privacy implications and design a more responsible recruitment strategy. It also helps HR teams think critically about how to optimise the recruitment process, from sourcing and screening to onboarding and early career development.

Human oversight still makes the difference

Across the panel, one message was consistent: even with sophisticated AI recruitment tools, human judgement still determines whether outcomes are fair, sensitive to context and aligned with company culture.

Hallucinated profiles, overly polished answers and opaque hiring data demonstrate why inclusive hiring practices require people to monitor, question and refine results produced by AI systems. Hiring managers play an essential role in interpreting context, understanding cultural fit, and ensuring that automation supports rather than undermines inclusive workplaces.

A future built on balance

As Rachael closed the session, the room agreed: there is no single blueprint for adopting AI in the hiring process. But by combining experimentation, transparency and thoughtful use of AI, organisations can design more equitable hiring practices that widen access, reduce bias and connect diverse talent with meaningful opportunities.

The Belong Amsterdam Network exists precisely for these conversations – helping HR leaders explore how talent acquisition, ethics and technology can work together to create fairer, more inclusive recruitment for the future.